Detecting in-focus regions in a low depth-of-field (DoF) image has important applications in scene understanding, object-based coding, image quality assessment and depth estimation because such regions may indicate semantically meaningful objects.

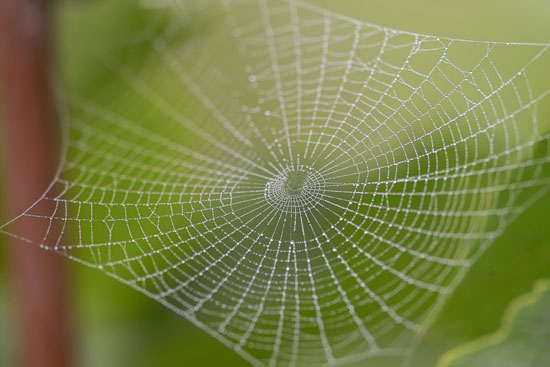

Here I show a challenging example, a spider web. Note that some parts of the defocus regions still demonstrate strong patch variances.

In our paper, we show that by simply using DCT band-pass filtering and harmonic variance, we could get very robust in-focus measurement, as shown in the following picture. The idea is simple. Defocus regions only cover some low frequency bands because of lens blur, while in-focus region cover the full spectrum bands. By using DCT band-pass filtering, we could extract those frequency responses. If we just sum those responses all together, the final estimate could be well biased due to very high responses of just some low frequency channels. Harmonic mean solves this problem by penalizing those outliers and could generate very robust outputs.

In short, this algorithm works by

1) apply 63 DCT band-pass filtering (for 8×8 patches) to the input image, excluding the first DC/average filtering

2) compute patch variance for each 63 filtered images. Now at every pixel location (x, y), we have 63 variance measurements

3) compute harmonic mean of these 63 variance as harmonic variance

4) optionally, we could apply sigmoid function to the harmonic variance image to restrict the outputs to be [0, 1].

If you want to estimate the actual noise level of the input image, please refer to our paper for kurtosis analysis.

import numpy as np

import scipy.misc as misc

import scipy.ndimage as ndimage

import scipy.stats as stats

import matplotlib.pyplot as plt

def Sigmoid(x, ratio) :

return 1 / (1 + np.exp(-ratio * x) )

def Tanh(x, ratio) :

return 2 * Sigmoid(2 * x, ratio) - 1

def GenerateDCT8x8Base() :

N = 8

x = np.array( np.arange(0, N ), dtype=np.float32, ndmin=2 )

C = np.cos( np.transpose(2*x+1) * x * np.pi / (2.0*N) ) * np.sqrt(2.0/N)

C[:, 0] = C[:, 0] / np.sqrt(2.0)

plt.close('all')

plt.figure()

filters = []

for i in range( N ) :

for j in range( N ) :

X = np.transpose(np.array(C[:, i], ndmin=2)) * np.transpose( C[:, j])

im = misc.imresize(X, (128, 128), interp='nearest')

plt.subplot(N, N, i * N + j + 1)

plt.imshow(im, cmap=plt.cm.gray)

plt.axis('off')

filters.append( X )

del filters[0]

return filters

def ComputeHarmonicVariance(im, kernels):

size = list(im.shape)

size.append(len(kernels))

k_size = kernels[0].shape

k_avg = np.ones( k_size, np.float32) / (k_size[0] * k_size[1])

outs = np.empty( size, dtype=np.float32)

for i in range(len(kernels)) :

data = ndimage.convolve(im, kernels[i])

data2 = data * data

EX2 = ndimage.convolve(data2, k_avg)

E2X = np.square(ndimage.convolve(data, k_avg))

outs[:,:,i] = EX2 - E2X

variance = np.empty( im.shape, dtype=np.float32 )

for y in range(size[0]):

for x in range(size[1]):

data = outs[y, x, :]

variance[y, x] = stats.hmean(outs[y,x,:]+1e-8)

return variance

im = misc.imread('Spider-Web-Macro-Photography.jpg').astype(np.float32)

im = im[:,:,0] # extract Red channel for demonstration

kernels = GenerateDCT8x8Base()

hv = ComputeHarmonicVariance(im, kernels)

plt.figure(), plt.imshow(Tanh(hv, 0.01), cmap=plt.cm.gray), plt.title('hv t 0.01')